Friends,

Which is more important to you? Allowing Pete Hegseth to use artificial intelligence (AI) however he wants, OR preventing AI from doing mass surveillance of Americans and creating lethal weapons without human oversight?

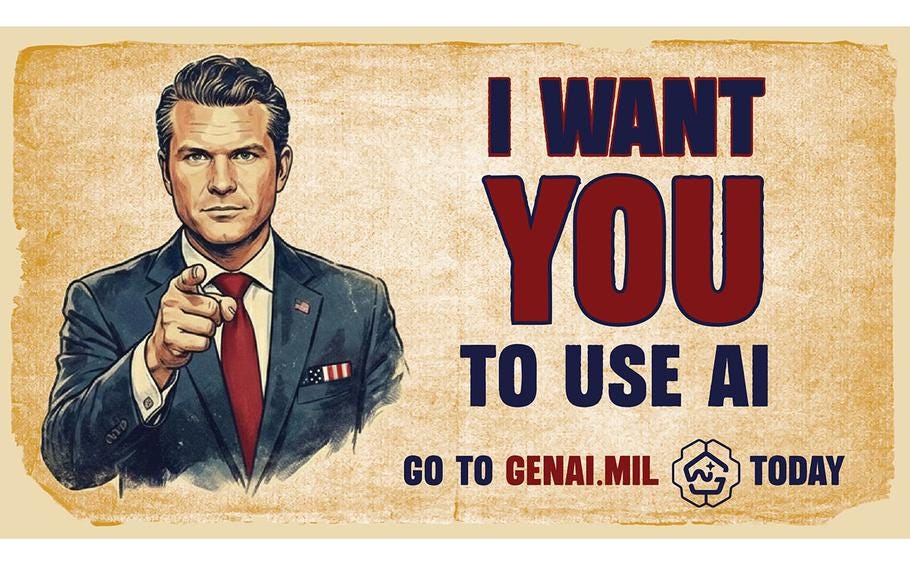

That’s the stark choice posed by the intensifying fight between an AI corporation called Anthropic and Pete Hegseth, Trump’s Secretary of “War.”

AI is dangerous as hell. I view it as one of the four existential crises America now faces — along with climate change, widening inequality, and the destruction of our democracy.

To be sure, AI is capable of changing human life for the better. But if unregulated, it could be a destructive nightmare — giving government the power to know everything about us and suppress all dissent, distorting news and media to the point where no one can distinguish between lies and truth, and threatening human beings with bots that could decide we’re unnecessary obstacles to their taking over the earth.

Now is the time we should be putting guardrails in place. But two forces are making this difficult if not impossible.

The first is corporate greed, which is why OpenAI, Elon Musk’s xAI, and Google have jettisoned all precautions. Several AI researchers have left AI companies in recent weeks, warning that safety and other considerations are being pushed aside as their corporations raise billions of dollars and prepare for initial public offerings that will make their executives hugely wealthy.

The second is the Trump regime, which doesn’t want any restrictions on AI, including those imposed by state governments. That’s largely because the AI industry has become a powerful force in Washington, throwing money at politicians who’ll do its bidding (including Trump) and against politicians who want guardrails. And because so many Trump officials are corrupt, with their own financial stakes in AI.

Anthropic has been one of the most safety-conscious of all AI companies. It was founded as an AI safety research lab in 2021 after its CEO Dario Amodei and other co-founders left OpenAI, concerned that OpenAI wasn’t focused enough on safety.

Amodei has argued that A.I. needs strict guardrails to prevent it from potentially wrecking the world. In 2022, he chose not to release an earlier version of Anthropic’s AI software Claude, fearing it would start a dangerous technology race. In a podcast interview in 2023, he said there was a 10 to 25 percent chance that A.I. could destroy humanity.

In January, Amodei argued in an essay that “using A.I. for domestic mass surveillance and mass propaganda” was “entirely illegitimate,” and that A.I.-automated lethal weapons could greatly increase the risks “of democratic governments turning them against their own people to seize power.” Internally, the company has strict guidelines barring its technology from being used to facilitate violence.

Over the past year Anthropic has battled the Trump regime by pushing for state and federal AI guardrails.

In recent weeks, Hegseth and Amodei have been fighting over the Pentagon’s use of Anthropic’s AI, called Claude. Amodei has stuck to his demands: no surveillance of Americans, and no lethal autonomous weapons lacking human control.

The fight started when Palantir helped the Pentagon capture Venezuelan president Nicolás Maduro. Palantir is a Pentagon contractor that uses Anthropic’s Claude. (Palantir, co-founded by far-right billionaire Peter Thiel and now headed by Alex Karp, is my candidate for the worst corporation in America because it allows governments, militaries, and law enforcement agencies to quickly process and analyze massive amounts of your personal data.)

When top executives at Anthropic asked executives at Palantir if Claude had been used in the Maduro operation, the Palantir execs became alarmed that Anthropic might not be a reliable partner in future Pentagon operations. They contacted the Pentagon and Hegseth.

Last Tuesday, Hegseth issued Anthropic an ultimatum: It must allow the Pentagon to use its AI for any purpose or the Trump regime will invoke the Defense Production Act, forcing Anthropic to let the Pentagon use Claude while also putting all of Anthropic’s government contracts at risk.

The Pentagon already has agreements with Musk’s xAI to use its AI Grok, and is closing in on an agreement with Google to use its own AI model, Gemini. But Anthropic’s Claude is considered a superior product, producing more accurate information.

What’s at stake here? Everything.

Pentagon officials have said that they have the right to use AI however they wish, as long as they use it lawfully.

But because the AI industry has so much political power, Congress and the Trump regime won’t enact laws to prevent AI from doing horrendous things. That in effect leaves the responsibility to private AI companies such as Anthropic. Anthropic says it wants to support the government but must ensure that its AI is used in line with what it can “responsibly do.”

Hegseth and the Trump regime have given Anthropic until this Friday at 5 pm to consent to letting the Pentagon use its AI however the Pentagon wishes. Otherwise, they say, the government will simply take it.

Friends, this isn’t just a dispute between two people — Hegseth and Amodei. Nor is it a fight between the Pentagon and a single corporation. The issue goes way beyond this particular controversy. I don’t want to be overly alarmist about it, but the outcome could affect the future of humanity.

What can you do? Call your senators and representatives now, today, and let them know you don’t want the Defense Department to take Anthropic’s AI technology, and that you do want them to enact strict controls on the future uses of AI.

Visit www.congress.gov/members/find-your-member and type your address into the search box. A list of your representatives and their contact information will appear. Or you can call the Capitol switchboard directly at 202-224-3121 to be connected to your members’ offices.

As I’ve said before, congressional staffers log every single call that comes into their office in a database that informs the member of the issues their constituents are engaged with, and they use this data to inform their decisions. Staffers answering the phones are trained to talk with constituents, and they do it all day. They won’t be debating you about your position, and are likely to be primarily listening and taking notes.

Please. Today.

This post has been syndicated from Robert Reich, where it was published under this address.